We imagine how the world works in our heads all the time. It doesn’t matter whether what we imagine is real, hypothetical, or remembered: our minds create mental sketches, which are our internal representations of external reality.

Whether evolution or God gets the credit for this human ability depends on who you ask. Either way, we didn’t earn it. It just comes naturally to us. For computers, however, modeling messy real-world situations is a colossal task.

That’s why researchers and physical AI companies are increasingly focusing on world models. World models give AI systems an intuition about how reality works, much like the one humans have.

In this blog post, we’ll explore the exciting role of world models in building the physical AI of the future.

Craik and his mental model

Kenneth Craik was a Scottish psychologist who is recognized as one of the pioneers of modern cognitive science. He died at just 31 in a bicycle accident, but packed an extraordinary amount of thinking into a short life.

In 1943, he published his big idea of mental models in his book The Nature of Explanation. Craik suggested that the mind works by building small-scale models of reality inside itself to anticipate events.

This simple yet profound idea is a precursor of many modern AI systems.

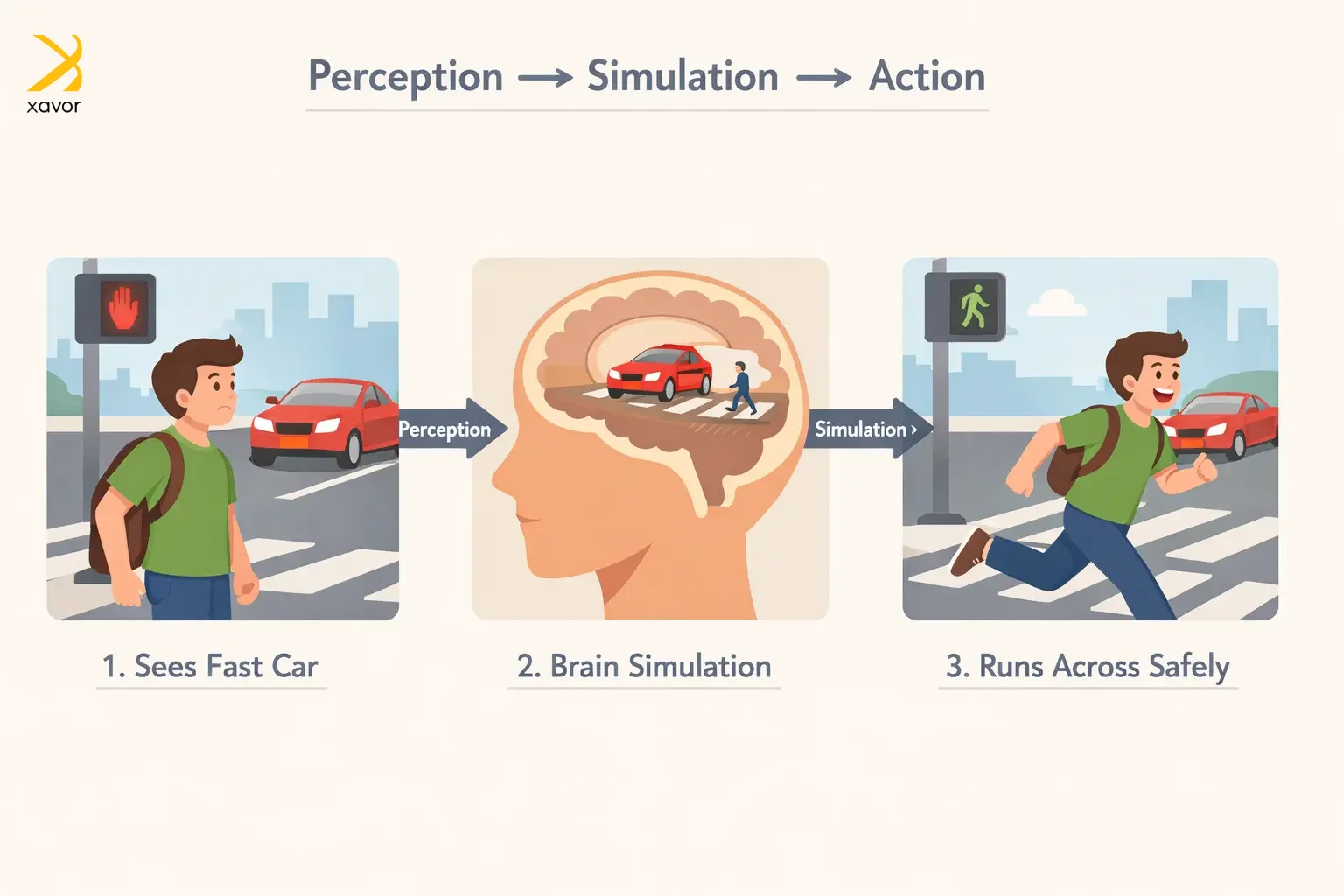

For example, when you’re about to cross a busy road, your brain quickly simulates how fast the approaching car is moving and concludes that you should run instead of walk to avoid being hit. You’re not calculating physics equations. You’re using a mental model of how cars and people behave.

What are world models in AI?

World models apply the same principle as Craik’s mental models so that AI can predict outcomes and act in line with how the physical world behaves.

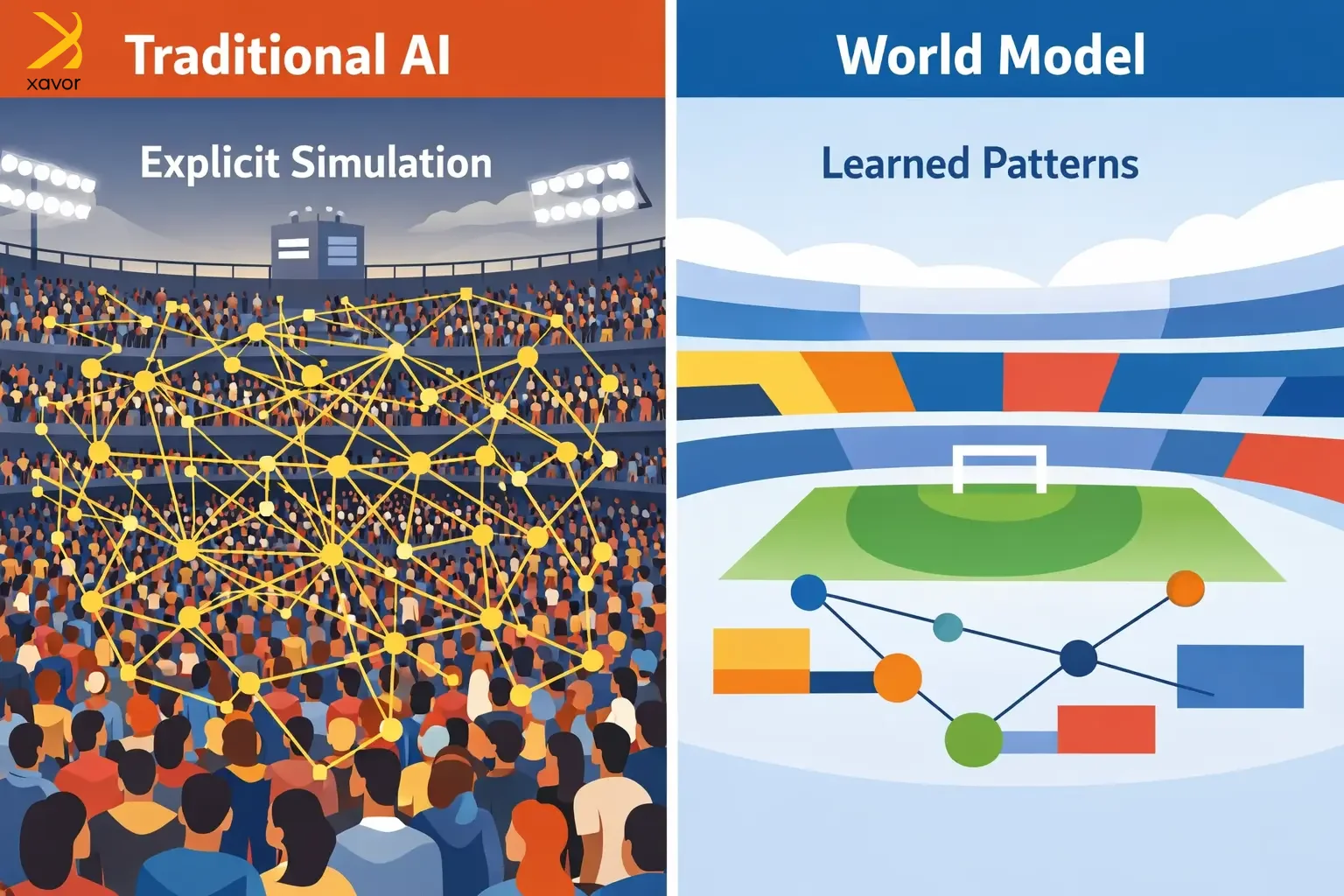

Imagine that you’re at the Super Bowl. Even if you’ve never been there, you can vividly picture the whole atmosphere: 80,000-odd fans in their team colors, the roar of the crowd, the beer and nachos. It’s the quintessential American experience.

For traditional, rule-based AI to simulate that, however, it needs explicit instructions for everything. Every fan. Every flag. Every interaction between every fan and every other fan. Add more people and the number of pairwise interactions grows roughly with the square of the crowd size. Nothing happens unless someone writes code for it.
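To put a number on “every interaction between every fan and every other fan”, the Python sketch below counts the distinct pairs in a crowd of n people, n(n - 1)/2. It assumes, purely for illustration, that a hand-coded simulation has to handle each pair separately, so treat it as a back-of-the-envelope estimate rather than a model of any real simulator. The function name is hypothetical.

```python
def pairwise_interactions(n: int) -> int:
    """Number of distinct pairs among n agents: n * (n - 1) / 2."""
    if n < 0:
        raise ValueError("crowd size must be non-negative")
    # Integer division is exact here because n * (n - 1) is always even.
    return n * (n - 1) // 2


if __name__ == "__main__":
    # Each 10x increase in crowd size means roughly 100x more pairs.
    for crowd in (10, 1_000, 80_000):
        print(f"{crowd:>6,} fans -> {pairwise_interactions(crowd):,} pairs")
```

For the 80,000-fan stadium this comes to 3,199,960,000 pairs, about 3.2 billion, before modeling flags, noise, or anything else.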

This approach is neither efficient nor feasible for physical AI products that operate in real life. A healthcare robot, for example, has to navigate the world’s endless messiness: if a child suddenly runs past, the robot needs to respond immediately.

The world won’t pause while it calculates. World models are designed to address this predicament.

A world model learns general patterns of how the world works instead of being hand-programmed with every rule. Rather than calculating every detail from scratch, it forms a compressed internal understanding of reality, such as:

- How objects move

- How forces and collisions work in a 3D space

In short, world models provide spatial awareness. That is why they are so promising for physical AI, embodied AI, and robotics: technologies that interact with the real world.
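To make “learns instead of being hand-programmed” concrete, here is a minimal Python sketch under heavy simplifying assumptions: a single ball falling in one dimension, simulated noisy height readings standing in for sensor data, and a “model” that is just one learned number. The learner never sees the value of gravity; it estimates a constant acceleration from the observations and then uses it to predict the next position. All function names and numbers are hypothetical, and real world models learn far richer dynamics with large neural networks.

```python
import random


def observe_drop(steps: int, dt: float, seed: int = 0) -> list[float]:
    """Simulated, slightly noisy height readings (m) of a ball dropped from 100 m.

    The true gravity value is used only to generate the data;
    learn_acceleration() never sees it.
    """
    rng = random.Random(seed)
    g_true = 9.81
    return [100.0 - 0.5 * g_true * (k * dt) ** 2 + rng.gauss(0.0, 0.005)
            for k in range(steps)]


def learn_acceleration(heights: list[float], dt: float) -> float:
    """Estimate a constant acceleration from equally spaced positions.

    Under constant acceleration a, the second difference
    h[k+1] - 2*h[k] + h[k-1] equals a * dt**2, so we average it over the data.
    """
    if len(heights) < 3:
        raise ValueError("need at least three observations")
    second_diffs = [heights[k + 1] - 2 * heights[k] + heights[k - 1]
                    for k in range(1, len(heights) - 1)]
    return sum(second_diffs) / len(second_diffs) / dt ** 2


def predict_next(prev: float, curr: float, accel: float, dt: float) -> float:
    """Roll the learned rule forward one step: h[k+1] = 2*h[k] - h[k-1] + a*dt**2."""
    return 2 * curr - prev + accel * dt ** 2


if __name__ == "__main__":
    dt = 0.1
    heights = observe_drop(steps=30, dt=dt)
    a = learn_acceleration(heights, dt)
    print(f"learned acceleration: {a:.2f} m/s^2")
    print(f"predicted next height: {predict_next(heights[-2], heights[-1], a, dt):.2f} m")
```

With these settings the learned acceleration should come out close to -9.81 m/s² (negative because the height decreases). The rule is fitted from data rather than typed in, which is the idea described above scaled down to a single parameter.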

World models vs LLMs: What’s the difference?

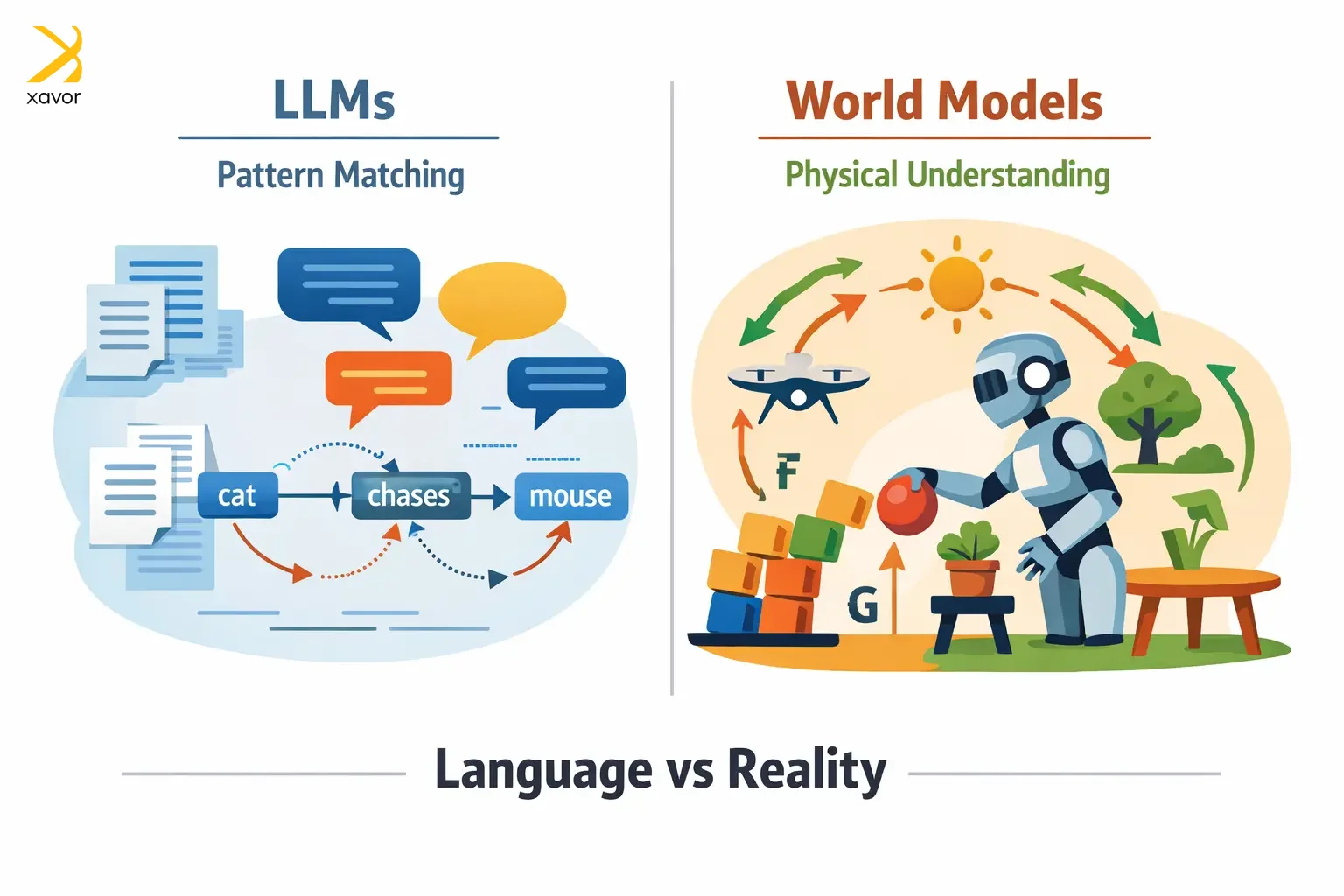

LLMs underpin much of modern AI. But they excel mainly at language, and language alone is not enough to understand the real, physical world.

LLMs have some inherent limitations that make them poorly suited to physical AI on their own:

- They match patterns to produce answers that look like understanding, without any real comprehension underneath.

- LLMs have inherent security vulnerabilities. They can be manipulated into saying or doing things they shouldn’t.

- Teaching LLMs new things is difficult. Training them on new data can break existing behavior or make them forget previously learned information.

If you ask an LLM about gravity or thermodynamics, it answers by drawing on patterns in the vast amount of text where those ideas appear together. So no matter how convincingly ChatGPT explains a real-world phenomenon, that knowledge is mostly pattern-based.
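As a deliberately crude caricature of “what words follow other words”, the Python sketch below trains a bigram model: it counts which word follows which in a tiny made-up corpus and predicts the most frequent follower. Real LLMs are large transformer networks, not word counters, but they share the basic objective of predicting the next token from text. The corpus and function names are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Optional


def train_bigram(text: str) -> dict[str, Counter]:
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next_word(model: dict[str, Counter], word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


if __name__ == "__main__":
    corpus = "objects fall down . apples fall down . gravity pulls objects down"
    model = train_bigram(corpus)
    print(predict_next_word(model, "fall"))      # prints "down"
    print(predict_next_word(model, "teleport"))  # prints "None"
```

The model answers “down” after “fall” purely from co-occurrence counts; nothing in it represents mass, force, or acceleration.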

World models, on the other hand, learn how reality works, not just which words follow other words. A world model takes in the kind of messy, multi-sensory input the real world actually throws at you and uses it to build an internal sense of how the world behaves.

| Aspect | LLMs | World models |
| --- | --- | --- |
| What they learn from | Mostly text, like books, websites, articles | Data from interaction with the world using sensors, simulations, telemetry |
| Core design | Usually transformer models that focus on relationships between words/tokens | Usually mixed systems that encode observations and model how situations change over time |
| Main goal | Predict the next word or token | Predict what will happen next in an environment and help choose actions |
| How they are trained | Mostly self-supervised learning on large text datasets | Self-supervised learning, and often reinforcement learning when action and feedback matter |
| Best at | Language tasks like writing, summarizing, translating, answering questions | Tasks involving action and dynamics, like robotics, control, simulation, and model-based RL |
| Relation to the real world | Indirect: they know the world through descriptions in language | More direct: they learn from observations, interaction, and environmental feedback |

How are world models made, and how do they work?

Building a world model is still expensive. It is also a slow process that requires processing huge amounts of data. The payoff, however, is a system that genuinely understands physical reality.

You need to feed it an enormous amount of real-world data, such as video, images, and sensor readings, covering as many conditions as possible. Neural networks with billions of parameters then digest all of it to extract the underlying rules of how things move, how depth works, and how one action leads to another.

Training can cost millions of dollars in compute alone, before a single robot takes a step.

The working formula

World models work through three connected pieces.

1. Perception

First, the model needs to see. Computer vision encoders are essentially its eyes, translating raw sensory input into something the system can work with internally, much as your eyes hand your brain a processed, compressed signal.

2. Memory

Next, it needs to keep track of where things currently stand: not just what it saw a moment ago, but the full context of the situation. This is the model’s running understanding of the present state of the world.

3. Prediction

Finally, and most crucially, it simulates what happens next. Given the current state of things and a possible action, a world model runs the scenario forward, not by looking up an answer but by having internalized enough about how reality works to generate a plausible future.
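The three pieces can be sketched as a toy pipeline in Python. This is an illustration under strong assumptions, not how production world models are built: the “encoder” just finds the brightest pixel in a hypothetical one-dimensional camera frame, the “memory” is a simple alpha-beta tracking filter that keeps a running position and velocity estimate, and the “prediction” step rolls that state forward under a chosen action (an acceleration). In a real world model, each of these would be a learned neural network working on high-dimensional latent states.

```python
from dataclasses import dataclass


@dataclass
class State:
    position: float  # estimated position of the tracked object (pixels)
    velocity: float  # estimated velocity (pixels per time step)


def perceive(frame: list[float]) -> float:
    """Perception: compress a raw 1-D frame into a single observation.

    Toy 'encoder': return the index of the brightest pixel.
    """
    return float(max(range(len(frame)), key=frame.__getitem__))


def update_memory(state: State, observed: float, dt: float,
                  alpha: float = 0.5, beta: float = 0.3) -> State:
    """Memory: blend a new observation into the running state (alpha-beta filter)."""
    predicted = state.position + state.velocity * dt
    residual = observed - predicted  # prediction error for this step
    return State(position=predicted + alpha * residual,
                 velocity=state.velocity + (beta / dt) * residual)


def predict(state: State, action: float, dt: float, steps: int) -> list[float]:
    """Prediction: imagine future positions if `action` (an acceleration) is applied."""
    pos, vel = state.position, state.velocity
    future = []
    for _ in range(steps):
        vel += action * dt
        pos += vel * dt
        future.append(pos)
    return future


if __name__ == "__main__":
    dt = 1.0
    state = State(position=0.0, velocity=0.0)
    # Hypothetical frames: the object moves one pixel to the right per step.
    for t in range(8):
        frame = [0.0] * 20
        frame[t] = 1.0
        state = update_memory(state, perceive(frame), dt)
    print(state)
    print(predict(state, action=0.0, dt=dt, steps=3))
```

After eight frames the running estimate should sit near position 7 and velocity 1, and the prediction step extends that motion into the future without any new observations.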

What are the possible use cases of world models?

World models are arguably the most important step towards artificial general intelligence (AGI), and in our view more important than chatbots and other branches of generative AI.

When a robot can simulate “what happens if I do this” before doing it, something fundamental shifts. It stops being a very sophisticated rule-follower and starts resembling something closer to an agent with judgment.
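The “what happens if I do this” loop can be sketched as model-based planning: imagine each candidate action with a model, then act on the best imagined outcome. The Python toy below revisits the road-crossing example from Craik’s section. Here the “model” is a hand-written kinematics rollout and every number is a made-up assumption; in a real robot, the imagine() step would be the learned world model, and the scenario would be far richer.

```python
from typing import Optional

# All numbers below are made-up assumptions for a toy road-crossing scenario.
ROAD_WIDTH = 7.0      # meters the pedestrian must cover
CAR_DISTANCE = 40.0   # car's starting distance from the crossing (meters)
CAR_SPEED = 14.0      # meters per second, roughly 50 km/h
ACTIONS = {"walk": 1.4, "run": 4.0}  # candidate pedestrian speeds (m/s)


def imagine(speed: float, dt: float = 0.05) -> bool:
    """Imagined rollout: True if the pedestrian clears the road before the car arrives."""
    pedestrian, car = 0.0, CAR_DISTANCE
    while pedestrian < ROAD_WIDTH:
        if car <= 0.0:
            return False  # the car reaches the crossing while we are still on the road
        pedestrian += speed * dt
        car -= CAR_SPEED * dt
    return True


def plan() -> Optional[str]:
    """Try each action in imagination, slowest first; return the first safe one."""
    for name, speed in sorted(ACTIONS.items(), key=lambda item: item[1]):
        if imagine(speed):
            return name
    return None  # no safe option: better to wait at the curb


if __name__ == "__main__":
    for name, speed in ACTIONS.items():
        print(f"{name}: {'safe' if imagine(speed) else 'hit'}")
    print("chosen action:", plan())
```

With these assumed numbers, walking fails in imagination (crossing takes 5 seconds, while the car arrives in under 3), so the planner chooses to run. Checking the slowest action first is a design choice that favors the least effortful safe option.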

Self-driving cars

Autonomous vehicles are the most visible use case of world models. World models let developers generate endless synthetic driving scenarios without putting a single test car on a real road. The vehicle learns from situations that haven’t happened yet, which is exactly what safe autonomous driving requires.

Robotics

Robotics is where things get quietly profound. Robots trained with world models can practice thousands of tasks in virtual simulation before touching anything real. What used to require expensive, risky real-world trials can now happen overnight in a simulator.

Video analytics

Video analytics is a less glamorous but enormously practical application. World models can watch live and recorded video at scale and actually understand what’s happening in it.

Conclusion

World models aren’t a new idea. The concept traces back to Craik’s monumental work, published before “artificial intelligence” was even coined as a term. What is new is that world models have now truly entered the mainstream.

Their comeback is driven by the growing integration of AI into our daily lives. If we truly want to move into an AI-driven future, AI systems must understand our physical world. But many challenges remain.

Both hardware and software will have to advance rapidly before machines can mimic the intuition about the real world that our minds take for granted.

This piece is based on our first-hand experience working with the latest physical AI technology. In our opinion, language and code alone aren’t enough to reach the final stage of physical AI; that will only be possible through world models, and we are eager to see how they evolve.

Contact us to talk to our physical AI experts if you want professional insights into your own projects.

FAQs

What are world models in AI?

World models in AI are systems that learn how the real world works, like how objects move, interact, and change. Instead of just processing language, they build an internal simulation of reality to predict what will happen next and help make decisions.

Why are world models the next big thing in AI?

World models are the next big thing in AI because they enable machines to understand and interact with the real world, not just process text. Predicting how environments evolve and how actions lead to outcomes can unlock breakthroughs in robotics, autonomous systems, and truly intelligent decision-making.

How are world models different from LLMs?

LLMs are trained on text and focus on predicting words, so they understand the world indirectly through language patterns. On the other hand, world models learn from real-world data and focus on predicting how environments change.