PLM systems keep information connected throughout the product development process. But most of them make it really difficult to find the information you’re looking for. You have to chase answers through rigid filters over and over again. Pick a part type, a supplier, maybe a change status, then stack some more filters to approximate your question.

On top of that, most of them don’t understand the hierarchies within PLM data. Parent, child, and nth-level BOM are terms that their internal search facilities don’t process. So, you get answers that are off the mark. Sometimes PLM products give you superfluous details you didn’t ask for, and sometimes they leave out the things you were actually looking for.

And then the noise of real-world complexities makes information search even more difficult. Luckily, AI-enabled search in PLM solutions can help address many of these issues.

In this blog, we’ll explore how AI can help you find answers in vast amounts of PLM data quickly and accurately.

The problems with PLM search and findability

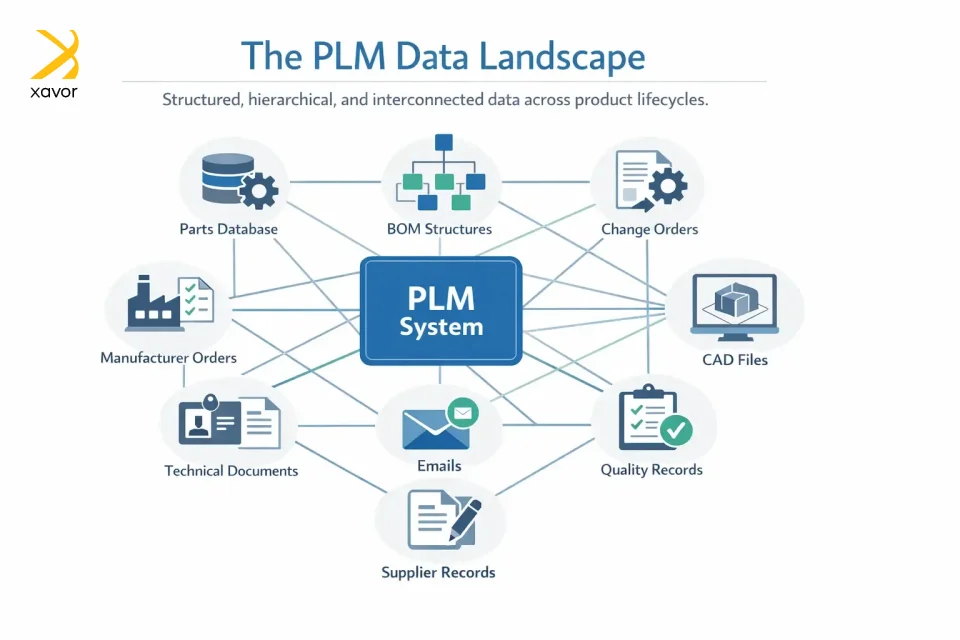

Searching in PLM is very different from Googling on the internet. Even a simple search operation in PLM involves a complex web of computational and organizational tasks. Since PLM systems deal with corporate data, they store tons of part numbers, documents, emails, and other types of information.

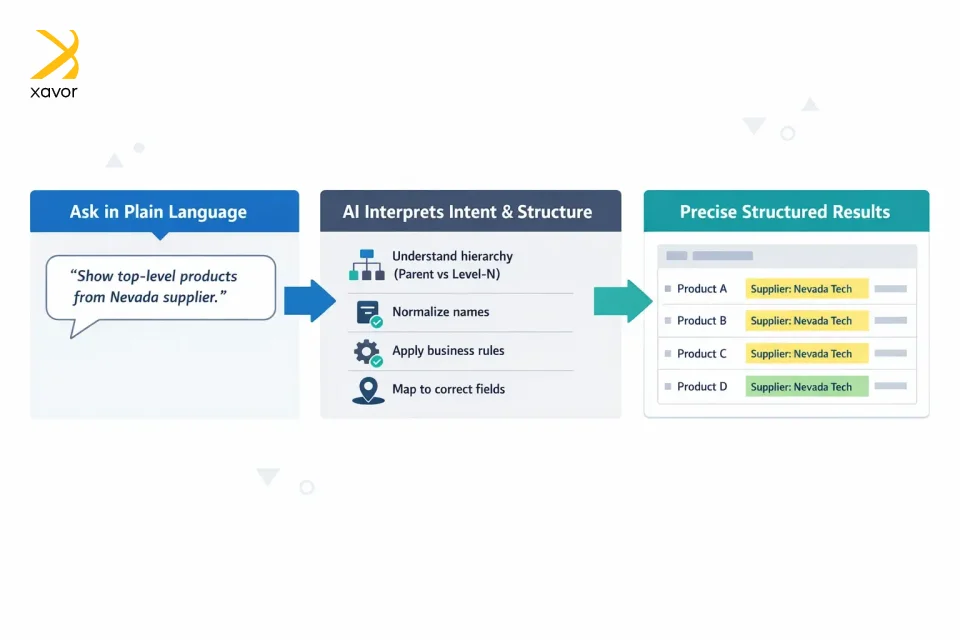

You can just ask a coworker to pass you the files that contain parts from the Nevada supplier’s top-level products. But searching for the same thing in PLM is a daunting task because you have to go through a maze of filters.

Moreover, many PLM users don’t know where to search. In the last few years, PLM vendors have worked to make data retrieval easy and hassle-free. And one approach that has gained popularity is the use of AI search assistants.

AI simplifies navigating the data jungle

AI can make PLM search feel less like keyword hunting and more like asking for what you mean. Instead of only matching the exact words you type, an AI model can try to understand your intent, that is, what you’re actually looking for. It can also make sense of the different kinds of content that get indexed.

In practice, that means you simply describe what you need in plain language. An AI layer translates your intent into precise, structure-aware, search-engine-specific queries behind the scenes. It understands hierarchy, respects business rules, and normalizes messy names so minor spelling/casing differences don’t block results.
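To make the name-normalization idea concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not how any particular PLM product does it: the suffix list, the function names `normalize_name` and `match_supplier`, and the 0.85 cutoff are all hypothetical. It uses only the standard library, with `difflib` standing in for whatever fuzzy matching a real system would use.

```python
import difflib
import re
import unicodedata
from typing import List, Optional

# Hypothetical legal suffixes to ignore when comparing names.
LEGAL_SUFFIXES = {"inc", "llc", "ltd", "corp", "co", "gmbh"}

def normalize_name(raw: str) -> str:
    """Reduce a supplier or organization name to a comparable key."""
    # Decompose accented characters and drop the combining marks.
    text = unicodedata.normalize("NFKD", raw)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    # Lower-case and turn punctuation or repeated spaces into single spaces.
    text = re.sub(r"[^a-z0-9]+", " ", text.lower())
    # Drop legal suffixes so "ACME, Inc." and "acme" compare equal.
    return " ".join(t for t in text.split() if t not in LEGAL_SUFFIXES)

def match_supplier(query: str, known: List[str], cutoff: float = 0.85) -> Optional[str]:
    """Return the known supplier closest to the query, or None if nothing is close."""
    keys = {normalize_name(name): name for name in known}
    hits = difflib.get_close_matches(normalize_name(query), list(keys), n=1, cutoff=cutoff)
    return keys[hits[0]] if hits else None
```

With this sketch, a query such as `match_supplier("nevada precison", ["Nevada Precision Inc.", "Acme Corp"])` resolves to the first supplier despite the typo and the missing suffix, while an unrelated string returns `None` instead of a wrong guess.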

You can ask for change orders, parts, manufacturer orders, and BOM-specific views in the same fluent way. All without learning syntax or clicking through a maze of dropdowns.

That makes search feel more natural, especially for newer users who don’t yet know the company’s naming conventions or the right advanced filters. And when people can reliably find existing parts, documents, or designs, they’re less likely to recreate work that already exists, reducing duplicate effort.

Why this is important

As industries become more digital and more systems connect, AI is likely to play a major role in design workflows. Early implementations like this one use AI as an assistant layer between people and complex data systems. That layer helps people get value out of the data without needing to speak the system’s exact language. Over time, the assistant role could enable tighter collaboration between humans and AI, where each does what it’s best at: humans provide goals and judgment, and AI handles discovery, connections, and scale.

In PLM search, that assistant layer shows up in a few concrete ways:

- One natural request → the right scope. The AI interprets position in the structure, like parent item vs. level-N component vs. any depth, and applies it directly in the search engine retrieval logic.

- Reliable field understanding. It distinguishes similarly named fields and pulls the value from the correct layer, so status isn’t confused with lifecycle, and the change number is taken from the authoritative place.

- BOM intelligence. You can target nth-level BOM, last-level components, or multi-level rollups. The system returns results with context, so you immediately see why an item was included.

- Stronger recall, cleaner precision. The AI expands synonyms and normalizes supplier and organization names while still keeping your constraints tight.

- Faster refinement. Follow-ups like “only production-ready,” “inactive suppliers only,” or “limit to this category” are understood in natural language and re-applied without rebuilding a filter stack.
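The difference between a level-N component, a last-level component, and a component at any depth is easier to see in code. The sketch below is only an illustration: the `BOM` dictionary, the item numbers, and the `components` function are hypothetical, and the structure is assumed to be acyclic. Each result carries its depth as a small piece of context for why the item was included.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

# Hypothetical BOM: parent item -> direct child components (assumed acyclic).
BOM: Dict[str, List[str]] = {
    "ASSY-100": ["SUB-200", "SUB-300"],
    "SUB-200": ["PART-210", "PART-220"],
    "SUB-300": ["PART-310"],
}

def components(root: str, level: Optional[int] = None,
               leaves_only: bool = False) -> List[Tuple[str, int]]:
    """Walk the BOM breadth-first and return (item, depth) pairs.

    level=None means any depth; level=N keeps only depth-N components;
    leaves_only keeps only items with no children (last-level components).
    """
    found: List[Tuple[str, int]] = []
    queue = deque([(root, 0)])
    while queue:
        item, depth = queue.popleft()
        children = BOM.get(item, [])
        if depth > 0:  # the root itself is the parent, not a component
            at_level = level is None or depth == level
            if at_level and (not children or not leaves_only):
                found.append((item, depth))
        # Stop descending once the requested level has been reached.
        if level is None or depth < level:
            queue.extend((child, depth + 1) for child in children)
    return found
```

Here `components("ASSY-100", level=1)` returns only the two sub-assemblies, `components("ASSY-100", leaves_only=True)` returns the three leaf parts, and calling it with no extra arguments returns every component at any depth.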

Under the hood, the search engine still does fast, scalable search and ranking, while the AI supplies the understanding layer that the filter UI can’t. You get consistent answers to complex, nested questions about change orders, parts, and manufacturer orders, plus deep BOM navigation. The net result is fewer clicks, fewer blind spots, and far more confidence that the results match exactly what you asked for.

How the AI-based search flow works

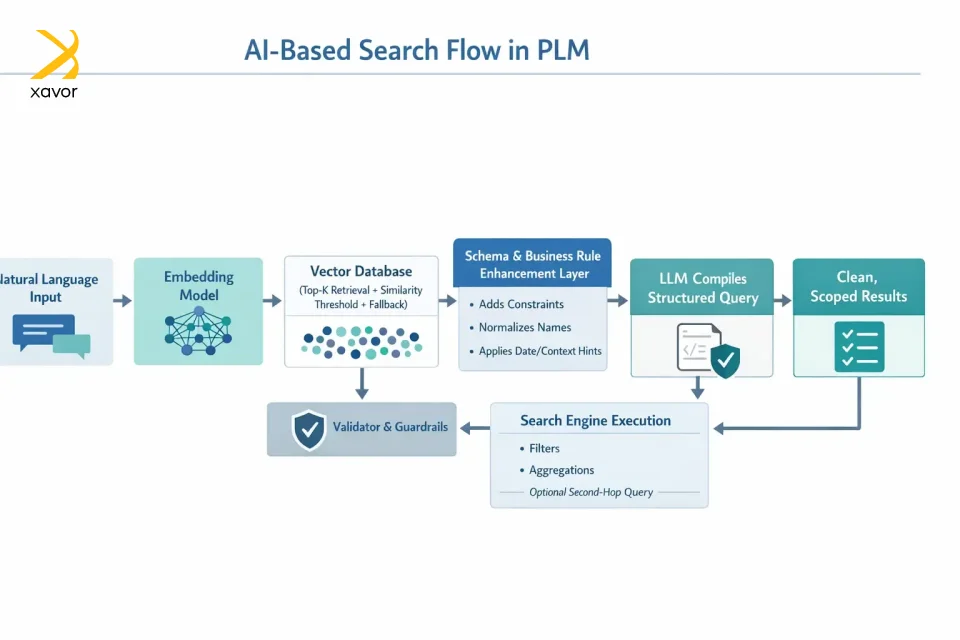

The flow starts with natural-language input. That input is embedded and compared against a vector database to retrieve the top-K most similar examples. Retrieval is guarded by a similarity threshold, with a fallback when matches are weak. The retrieved examples then pass through a lightweight enhancement step that blends in schema knowledge and business rules. This step:

- Adds constraints

- Normalizes names

- Applies date/context hints

The output of this step is a concise, structured instruction that captures your intent in a way a model can reliably follow. The LLM then compiles the instruction into a strict, search engine-specific query. Before anything runs, a validator checks syntax and guardrails. The search engine then executes the query, with support for filters, aggregations, and, when a request spans multiple entities, an optional second hop. Finally, you get clean, scoped results in return.
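The validation step is the easiest part of this flow to sketch. The example below assumes the generated query is a JSON object with `filters` and `size` keys. That shape, the field names in `ALLOWED_FIELDS`, and the `MAX_RESULT_SIZE` limit are all hypothetical and are not taken from any specific search engine.

```python
import json
from typing import List

# Assumed guardrails; a real deployment would derive these from its own schema.
ALLOWED_FIELDS = {"item_number", "supplier", "lifecycle", "change_number", "bom_level"}
MAX_RESULT_SIZE = 500

def validate_query(raw: str) -> List[str]:
    """Return a list of problems; an empty list means the query may run."""
    try:
        query = json.loads(raw)  # syntax check
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(query, dict):
        return ["query must be a JSON object"]

    problems: List[str] = []
    filters = query.get("filters", {})
    if not isinstance(filters, dict):
        problems.append("filters must be an object")
        filters = {}
    # Guardrail: only fields known to the schema may be filtered on.
    for field in filters:
        if field not in ALLOWED_FIELDS:
            problems.append(f"unknown field: {field}")
    # Guardrail: cap the result size so one request cannot pull everything.
    size = query.get("size")
    valid_size = (isinstance(size, int) and not isinstance(size, bool)
                  and 0 < size <= MAX_RESULT_SIZE)
    if not valid_size:
        problems.append(f"size must be an integer between 1 and {MAX_RESULT_SIZE}")
    return problems
```

Returning a list of problems rather than raising on the first one is a design choice for this sketch: it lets the caller send every issue back to the model in a single retry.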

Throughout, the vector store anchors each request to proven patterns for consistency, and it can be expanded over time with fresh examples to further improve accuracy and stability.
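The retrieval step at the start of the flow, with its similarity threshold and fallback, can be sketched roughly as follows. This is a toy version: the in-memory `store`, the default `k` and `threshold` values, and the `GENERIC_EXAMPLE` fallback are assumptions for illustration. A production system would use a real embedding model and vector database, and its fallback behavior may differ.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical fallback used when no stored example is similar enough.
GENERIC_EXAMPLE = "generic search template"

def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def retrieve_examples(query_vec: Sequence[float],
                      store: List[Tuple[str, Sequence[float]]],
                      k: int = 3,
                      threshold: float = 0.75) -> Tuple[List[str], bool]:
    """Return (examples, used_fallback) for an embedded query.

    store holds (example_text, embedding) pairs. Only the top-k matches
    that clear the similarity threshold are kept.
    """
    scored = sorted(((cosine(query_vec, vec), text) for text, vec in store),
                    reverse=True)
    hits = [text for score, text in scored[:k] if score >= threshold]
    if hits:
        return hits, False
    # Weak matches: fall back rather than steer the model with bad examples.
    return [GENERIC_EXAMPLE], True
```

Adding a fresh example is then just appending another `(text, embedding)` pair to the store, which is one way to read the point about expanding it over time.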

Reflections on existing PLM findability solutions

PLM systems already include some form of enterprise search, usually powered by common search technologies. But that search is mostly limited to data that lives inside the PLM world: things stored in, or directly referenced by, the PLM database. There are a few exceptions, but in general, the search doesn’t truly span the full product universe.

Data discovery tools exist, too, but they’re often narrow in scope. And most of them target IoT or operational data, which is only a fraction of corporate PLM data.

Products are increasingly seen as end-to-end experiences, where customer outcomes matter as much as features. That forces PLM to deal with new kinds of data it historically didn’t manage well, including customer signals and usage behavior. And because product data is spread across many systems, PLM can’t stay isolated.

AI-based search is a practical way forward

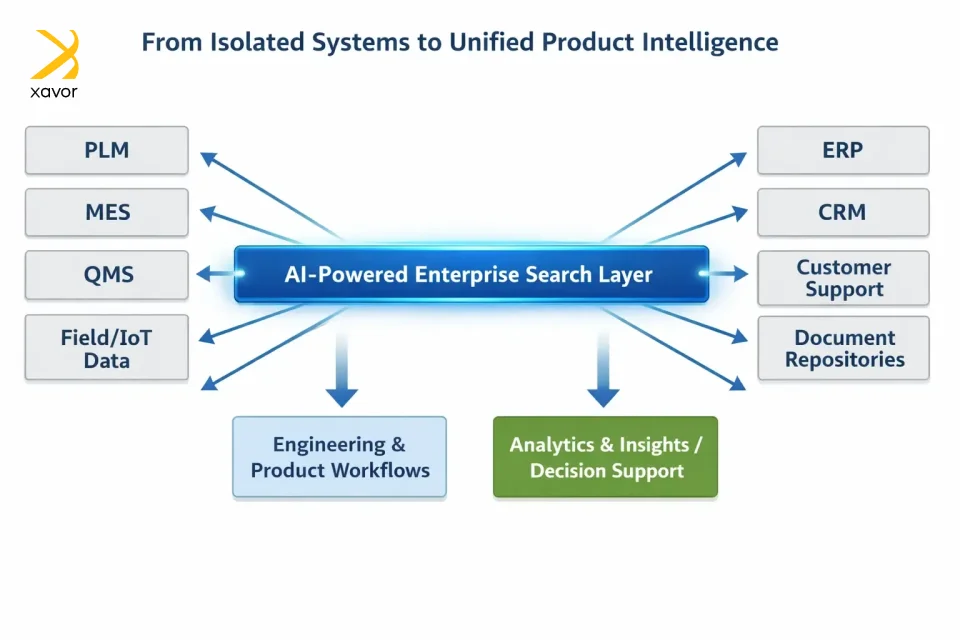

Implementing AI search assistants makes product information discoverable across disconnected systems and silos. The goal at this stage is to make it easy to find the right product data wherever it lives.

Once the data is findable, AI can also focus on making it reliable and connected through data governance. That means cleaning up definitions, ownership, quality, and consistency so the organization can build true digital threads and digital twins on a trustworthy foundation.

The core market need, right now, is a strong enterprise search layer that can pull product information from across the enterprise and feed it into two key destinations.

Finally, we can also add data discovery on top to bring performance and behavioral insight into the loop. That helps answer questions such as how customers respond, how products perform in the field, and what simulations or what-if analyses suggest.

Conclusion

PLM has really stood the test of time. Time and again, we have heard claims that PLM is becoming obsolete and will be replaced by data-centric methods. Some have even downgraded it to a subset of systems engineering. But PLM has only become more critical and advanced over the past few years.

The next wave of digitization is all about AI, so PLM systems must stay on top of AI capabilities to remain relevant. AI-based PLM search moves you from tedious filter-hunting to asking the exact question you care about. The system handles the hierarchy and the nuance, returning results that match your intent.

As a result, teams get faster answers, fewer blind spots, and far more confidence that the output is correct. And they do it without learning query languages or wrestling with rigid UI constraints.

Xavor has been in the PLM space for 15+ years. We have worked with Oracle Agile, Aras Innovator, and Propel to deliver for hundreds of clients in various industries. If you want to organize your PLM workflows around modern standards, drop us a line at [email protected] to book a free consultation session.

FAQs

AI in PLM is mainly used to improve search, automate classification, detect duplicate parts, analyze BOMs, and surface risks in change management. It helps users find information faster and make better lifecycle decisions using large volumes of engineering data.

Yes. AI can analyze geometry, metadata, and naming patterns to identify similar or duplicate parts across the system. This reduces redundant designs, lowers material costs, and improves standardization across products.

AI helps connect data across PLM, ERP, MES, and IoT systems by understanding relationships and context. It makes lifecycle traceability easier and enables insights into product performance, quality issues, and change impact across the entire lifecycle.